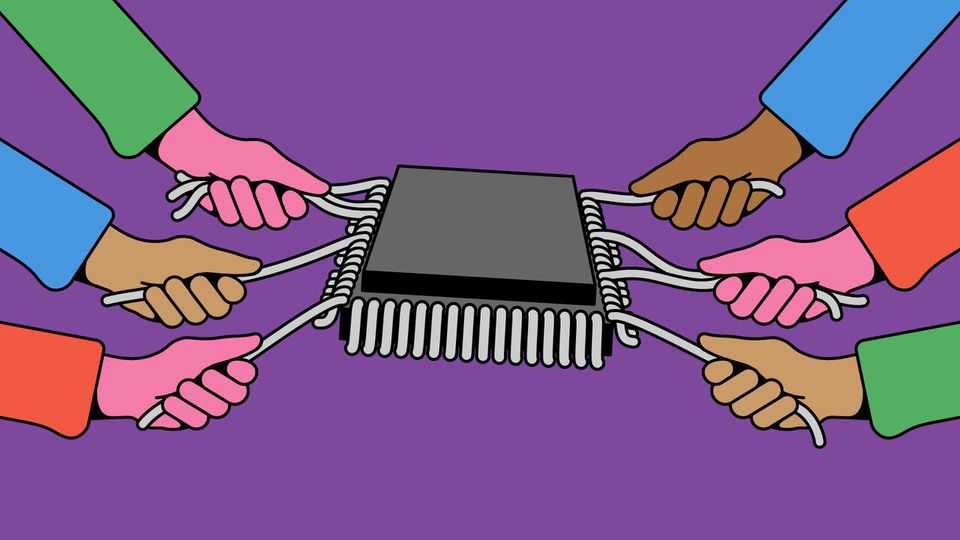

The AI supply crunch is here

Choke points are changing AI’s economics

Artificial intelligence has a supply problem. As the world gorges on tokens, the snippets of text by which the output of a large language model is counted, it is running short of them. Weekly token consumption quadrupled between January and March, according to OpenRouter, a marketplace for AI models, partly because of the growing use of coding tools. The industry cannot keep up.

At model-makers and tech giants, rationing is afoot. Anthropic, maker of Claude, recently adjusted its terms to deter heavy use during peak hours. Amazon says that “capacity constraints” have limited its growth. Sarah Friar, the finance chief of OpenAI, developer of ChatGPT, has said the company is not pursuing every opportunity because it does not have enough processing power (or “compute”). OpenAI recently scrapped its video-generation model.

The consequences of the supply crunch could be far-reaching. A world of scarce compute will shape the economics of AI, changing everything from the allocation of profits to the incentives to use the technology.

Adding AI capacity quickly is hard. Particularly in America, local opposition to new data centres has slowed their construction. Shortages of transformers, switchgear and gas turbines cause delays; some of this equipment can take two to five years to arrive. The tightest bottleneck is in processors. Chips for AI, such as those designed by Nvidia, the world’s most valuable company, remain scarce. The squeeze extends to other types of silicon, too, including memory chips and central processing units (CPUs). Few of these constraints will ease any time soon. Supply chains take years to expand and hardware-makers are still investing more cautiously than the hyperscalers they supply.

When hardware is expensive the size of your balance-sheet matters more than ever. Whichever part of the supply chain you look at, only a handful of firms have the financial muscle and bargaining power to lock up the hardware they need. This year the five data-centre “hyperscalers”—Amazon, Google, Meta, Microsoft and Oracle—will together shell out more than $750bn on capital expenditure. OpenAI and Anthropic have announced hundreds of billions of dollars in partnerships and investments. Nvidia is said to have bought most of the memory it will need in 2026 and part of 2027 well in advance. It has also invested across a range of tech firms to shore up its supply chain.

The greatest profits will be found at choke points. The AI boom has especially benefited Nvidia and TSMC, the Taiwanese manufacturer that makes almost all of the most advanced chips. Chip manufacturers’ pricing power has become as enormous as their transistors are tiny. Nvidia’s gross margin is about 75%, up from 60% in 2019. TSMC’s gross margin is above 60%, roughly twice that of many other contract manufacturers. The hardware giants also have sway over who gets scarce kit, though they deny picking favourites.

High prices are causing software makers to do more themselves. Custom chips can cost about half as much as buying from Nvidia. But they are not easy to design. Among the software firms doing so, only Google has managed to create a viable alternative in large volumes, and its effort began more than a decade ago. Displacing TSMC is harder still. Other chipmakers, including Intel and Samsung, have struggled to match it at the leading edge. Elon Musk, the boss of SpaceX and Tesla, has floated a plan for a “Terafab” to rival TSMC. Its estimated cost is a fantastical $5trn-13trn.

The last consequence of the crunch will be to slow the uptake of the technology. So far the AI boom has rested on the cheery assumption that answering queries will only get cheaper. And it has: “inference” prices have fallen five- to ten-fold in a year. In countries such as India, AI firms are offering cut-price subscriptions to lure users. But that obscures how much cash firms are burning through to sustain those falling prices. OpenAI and Anthropic are expected to lose billions of dollars in the coming years. As both prepare to go public, they will be eager to show that they can one day make a profit.

As AI spreads beyond the tech industry, where it is currently most popular, and people find more uses for it, prices will rise. If AI is to transform the economy, demand for tokens will grow by orders of magnitude. As model-makers pass on their rising compute costs, users will have to economise. Today many companies judge themselves by whether or not they use AI at all for a given task. Increasingly, as with human labour, they will have to ask whether they are using AI efficiently. ■